Thanks Andrea Deluxe.

Compare to 7970 xfire

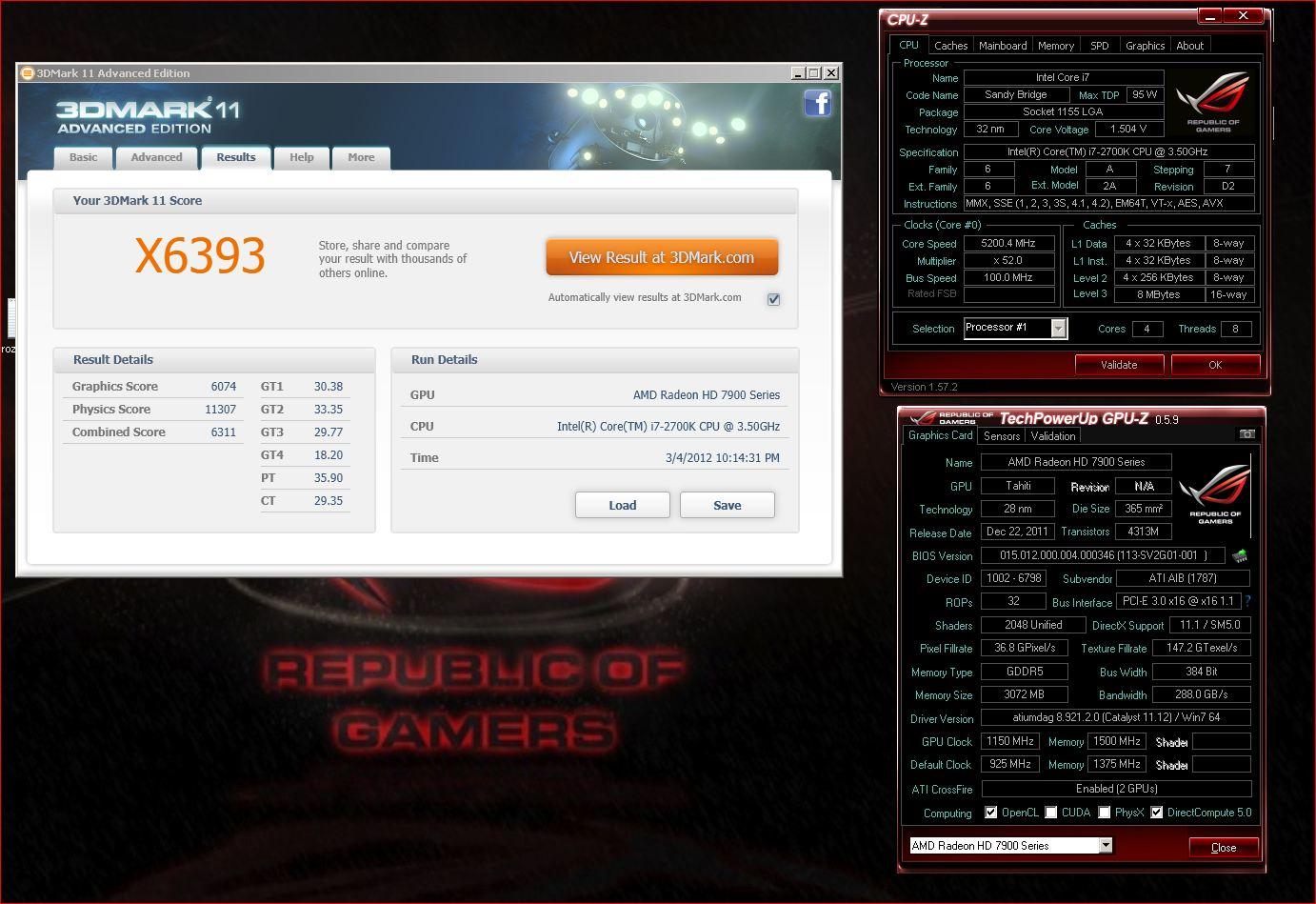

GTX 680 OC SLI @ 1150/1800 -VS.- HD7970 OC Crossfire @ 1150/1500

Bring... bring the amber lamps.

So they don't want to run games then? :/

"Cast off your fear. Look forward. Never stand still, retreat and you will age. Hesitate and you will die. SHOUT! My name is "

//James

i don't think i like what i see about the clocks in these images. it looks like it keeps jumping between 700 and 1150, or it's just updating one point on the chart as it goes between benchmarks and not tracking anything in the background while the benchmarks are running. i'm guessing it's the latter, but then it should be pretty simple to turn on "log while in background" like any normal person does.

2500k @ 4900mhz - Asus Maximus IV Gene Z - Swiftech Apogee LP

GTX 680 @ +170 (1267mhz) / +300 (3305mhz) - EK 680 FC EN/Acetal

Swiftech MCR320 Drive @ 1300rpms - 3x GT 1850s @ 1150rpms

XS Build Log for: My Latest Custom Case

that 2 way sli seems ok for those two cards, depending on the price they're released at.

that's a pretty sizable difference, considering the cpu differences. gpu score is 250pts higher for amd, even though total score is close

i think many of us are hoping these 2 gpus trade blows all over the place, should finally give us a price war once again like the 4800/280 days.

and at the end benefit customers from both camps.... Amen.

The hype came down in the end. And this will bring us better prices, so two-way setups will be less prohibitive.

This is just making me long for the GK110 more. Which is kinda bad.

Looks like pretty much 1:1 with the 7970. Let's hope the price tag comes in smaller.

Looks like I will be waiting for the big dog. While both the 7970 and 680 look to be great cards, they still won't get it done for me, as for my next purchase I'm looking for a single GPU that outperforms my 480s. Some of the new features they are using look very promising, and I'm interested to see how it all comes down even if it isn't the right card for me.

AsRock P67 Extreme4

2500K@4.8 1.37v 24/7 EK supreme HF

8Gb G-skill RipjawsX 1866/Cas8

EVGA GTX670 FTW

Creative XFI titanium

Corsair TX 850 PSU

G-skill SSD,Boot/games

W-D Black, storage

Coolermaster HAF/X

Acer 27in. 120hz

Nvidia for sure will make a dualie out of this. Nice card.

I'm still waiting for something worth replacing my GTX295 Quad-SLI (DX11 notwithstanding). Damn I got my money's worth out of those cards.

Just to clear things up:

Since this is not the high-end chip of nVidia's new family of cards (being a GK104 part), why the hell do they name it (GTX 680) and price it (>$500) as such? And since they do so, what does that say about the upcoming supposed flagship (GK110)? Will it ever see the light of day, or will nVidia, seeing that she has no reason to release it (since AMD doesn't give much competition), simply let it exist on paper alone?

When "politics" are so *strongly* involved with hardware development you know you're not in a good situation...

Because as it stands, this is their high end. Until they decide to release GK110 and adjust prices, this is what we have. I'm hoping for a price battle now...

As we have no verified information on the GK104, or GK110, do you think we can assume the GK104 was not intended to be the high end part all along? What if GK110 is a dual GPU version? What if it never existed, or was a design that got scrapped?

Based on the supposed benches we've seen, the rumored 680 looks to be outperforming the 7970 by about the same margin as the 580 > 6970 and the 480 > 5870. Seems in line with the last two launches?

Hard to say without knowing what it is/was, or if it existed ever. My thought is that when I go to buy video cards, I look at what's for sale and evaluate, not what companies are rumored to have been working on.

What do you mean by this? I'm guessing you're substituting "politics" for "business" based on the context.

If so, you will never be in a "good situation" as you put it. Here's why:

Let's say next time around NVIDIA launches Maxwell first and ATi finds themselves in the position where they have three parts they can launch that week: one that beats it by 20%, one by 50%, and one by 100%.

If you launch the 100% faster card you (a) make the amount of time you have to work on its successor as short as possible (bad), (b) raise expectations for its successor (bad), and (c) get one launch at top tier price instead of possibly three (bad).

Your problem is your expectations are from a consumer perspective, not a business perspective. You want as much as possible as fast as possible for as little as possible. Companies only want to maximize profit because that's what keeps the lights on and the engineers working.

I think I can almost guarantee you that for every product that launches, there were alternate designs that were scrapped or put on hold because they were either too expensive to bring to market, couldn't be delivered in a timely fashion, or just didn't need to be brought to market based on the reasons above.

One reaction might be "Oh man, what about the parts that might have been?!". Another might be, "Let's look at what we have and evaluate merits".

Intel 990x/Corsair H80 /Asus Rampage III

Coolermaster HAF932 case

Patriot 3 X 2GB

EVGA GTX Titan SC

Dell 3008

Finally someone who gets it.

From a business point of view, it is a massive improvement for Nvidia, as they did not need a 500+ mm2 chip to match or beat the competition this time around. They will sell their high end chip with a significantly improved margin over previous designs.

This is a huge win for the company, now in the same ballpark as AMD with efficient chips which don't require a 1000W+ PSU to run SLI/Crossfire.

Gigabyte Z77X-UD5H

G-Skill Ripjaws X 16Gb - 2133Mhz

Thermalright Ultra-120 eXtreme

i7 2600k @ 4.4Ghz

Sapphire 7970 OC 1.2Ghz

Mushkin Chronos Deluxe 128Gb

Has anyone tested the idle wattage of the 680 yet? Have they implemented something similar to AMD's?

Reeeally hope this ends up like the 8xxx/HD4xxx series price war! New cards to last 3/ years again please

My expectation is to have a healthier industry which is not artificially slowed down. No different than what we had 5 years ago when nVidia rolled out the 8800GTX even though the 8800GTS was enough to absolutely kill the competition. The sooner the best is out there, the better it is for the whole industry (from a technical point of view), given that the industry will *have to* move faster.

Sure, nVidia is also a business out to make a profit, but she is also the benchmark of hardware development on home computers, which has an actual, tangible effect on our lives. In short, the faster we get the best parts out in the market, the faster the industry will evolve and the faster it will have solutions to whatever problems may come about. Home computing (in and of itself) is a luxury no different than a car, yet the development done there affects society at large many times more than any supercar would.

Just think of the applications that such computing is starting to have for medical purposes, scientific purposes. At first we'll get a few more frames, but the side-effect (of the development that gives a few more frames per second) is huge and cannot be ignored. For more purposes than one we have a supercomputer stored in the tiny shell of our GFX cards and the faster it develops the better it is for all...

What can make the industry like it was is the real question, not why nVidia doesn't release its high end parts (if there are any around to begin with).

That's another common misconception: that the flow of tech is entirely within the control of companies and they can do things when they "have" to. Look at last year's Bulldozer launch, or the FX5800 launch, for all the evidence you need that this isn't true. These things are high tech inventions and you can't just tell your engineers "Invent faster and better! Our competitors have a lead!". Besides which, things like the state of the fab process and the cost of VRAM and silicon come into play. Maybe in the infancy of 28nm, yields on 500mm2 chips are too low to sell them for less than a thousand apiece. Maybe it makes more sense with current yields to sell 300mm2 (rumor) at $500 (unknown) than 500mm2 for $600, due to bigger margins on the 300 (rumor). You're still thinking like a consumer, as in "What is best for me is also best for the industry", when the two are unrelated.

History is littered with defunct companies that had one good idea. NVIDIA's responsibility is to the stockholders, to provide a profit on investment over the long term. Who knows? Maybe they have a better chip they're holding back because it's all they have in the works now that looks feasible and they want to buy time to come up with something else. Maybe the rumored 680 is what they planned all along for the 680, the best they had come to fruition. Maybe the fabled "better chip" couldn't be brought to market now, and even if it was a 100% increase on the 7970, a Q4 sale date would have meant a year's lost sales and the response "Geez Louise, in 10 months it BETTER be 100% faster! Late!". We'll never know. (And BTW, in the life cycle of the development time of these chips 10 months is nothing: time for a couple of respins only. The main design happens over many years.)

This I can't answer (why there are occasional huge leaps like the Athlon X2, the C2D, the 9700Pro, and the 8800GTX). My guess is they're the product of rare intersections in business/invention/market conditions.

I do know this. Anyone who's a gamer has a LOT of reasons to be excited about Kepler if the rumors are true: leading performance, lower power, new AA, new vsync, 4 monitor output, PhysX, 3D Vision, forced AO, CUDA.

If all the rumors are true the only reason I can think of anyone caring about a 7970 at all anymore is if they are one of the eight people on the planet with a 75X16 display set, or their decision hinges on the Skyrim texture pack and high AA at high res. Going to be a VERY small market for 7970s in that case.

Last edited by Rollo; 03-18-2012 at 10:24 AM.

Replying to Steve:

There are two problems with your assumption:

1- you assume there actually is a massive GK110 chip in the works and being purposefully held back by Nvidia.

2- you assume that Nvidia must always make huge 500mm2 chips in order to make their customers happy.

Maybe a big chip does not even exist and the infamous high end from Nvidia is just a dual GK104. How are you so sure that they are purposefully holding back?

as an AMD/ATI fanboy i am very excited about kepler

their perf/mm2 and power draw are sounding extremely good, enough to make us wonder what they could do with 300W on their bigger chip.

even if i were to still buy a 79xx i would want kepler to force a price drop of 20% or more in the next 2 months. and considering kepler's size and specs, they could easily undercut the 79xxs since their gpu has specs that look close to a 78xx (but with much higher perf)

They really really fixed the memory controller this time around. I think as long as the chip trades blows with the 7970, the hype has been met.....if it wasn't for the damn pricing. Yeesh. It totally screams price fixing I think.

I would hope Nvidia would put some pressure on AMD, because the card has significantly less memory and their die size is 20% smaller. $450 would be completely in line with what the GTX 460 and 8800 GT were, if it performed up to the 7970 with the smaller die and less memory.

If it is priced at $550, AMD doesn't have to do a darn thing, which is something AMD can be happy about, and it seems like price fixing is afoot.

GK110 seems like it would be a beast of a card, though. If it were released today, it would be very much like the 8800 GTX, making AMD's current generation look like last generation. Releasing it later makes sense for Nvidia: it allows GK104 to have the GTX x80 moniker and to be marketed at the price it is being released at. AMD must also be happy GK110 isn't out. It would look bad if GK110 had similar performance to a 7990 dual-GPU card, which it should if GK104 performs similarly to the 7970.

Last edited by tajoh111; 03-18-2012 at 11:13 AM.

Core i7 920@ 4.66ghz(H2O)

6gb OCZ platinum

4870x2 + 4890 in Trifire

2*640 WD Blacks

750GB Seagate.

I'm not really... in my last sentence I did make this clear ("*if* such a chip exists"). Traditionally, though, nVidia has a big chip in the works, and in this generation this doesn't seem to be the case. Either they had problems developing that (big) chip, or they changed their strategy (focusing only on medium chips), OR they are purposefully holding their product back from the market. Since I'm not an insider I cannot know which of those is the truth. What I do know (assume, in fact) is that if they are indeed holding their product back from the market (*if* they do that), then it's a damn shame, which may even affect them negatively in the long term (the 8800GTX affair made nVidia queen of sales for the 2-3 year period following that release; if history has taught us something, it is that if you have a strong lead on the market you had better show it)...

Anyhow, like I said, I don't know and I cannot know for sure. Traditionally, though, the even numbers in nVidia's counting system (GF 4xx, GF6xxx, GF 8xxx, GTX 2xx, etc) denoted an overhaul in architecture and an almost 100% increase in performance compared to the last (even) generation, while the odd numbers denoted smaller changes (a bit like Intel's tick). This generation around, that symmetry appears to have been broken (which is also what led me to believe that nVidia actually do/did have a big chip in the works).

Last edited by Stevethegreat; 03-18-2012 at 11:17 AM.
