Hi There.

(I recommend resizing your web browser to accommodate displaying the benchmark graphs side by side.)

First post, be nice. I'm a storage noob. I got my hands on a number of different SSD units that I thought would be fun to benchmark:

1) Qty 2 Intel X25-E (32GB, SLC)

2) Qty 3 Intel X25-M (80GB, MLC)

3) Just for kicks, included an Apple (Samsung) 128GB SSD (MLC)

I didn't want to rip apart my main rig, so all testing was done with spare parts I had lying around: Gigabyte P35-DS3R (Intel ICH9R), Q6600, and 4GB DDR2-800, running XP SP3 and the latest Intel Storage Manager drivers from Oct 2008.

The goodies:

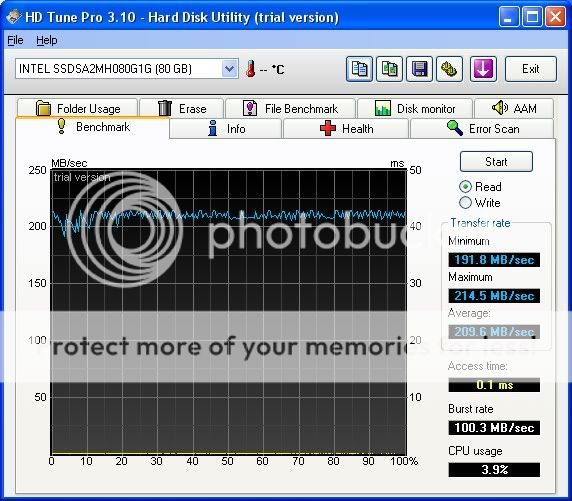

Let's start with single drives. According to the well-known Anandtech article, the ICH9R had an 80 MB/s cap on throughput. I don't believe this is an issue anymore, as I saw transfer rates well above 80 MB/s. For example, here are the read rates for the X25-E vs the X25-M. You'll see that the M has slightly higher read rates than the E, a theme I found consistent across all of my testing.

Single Drive Benchmarks

X25-E (left) vs X25-M (right)

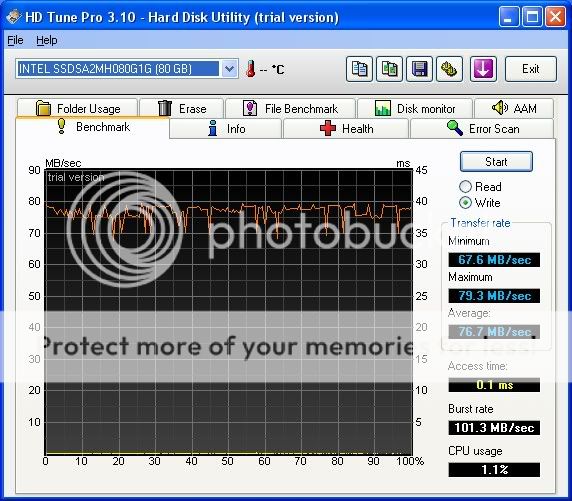

Writes, on the other hand, are a different story. The E drive blows away the M in terms of writes.

X25-E (left) vs X25-M (right)

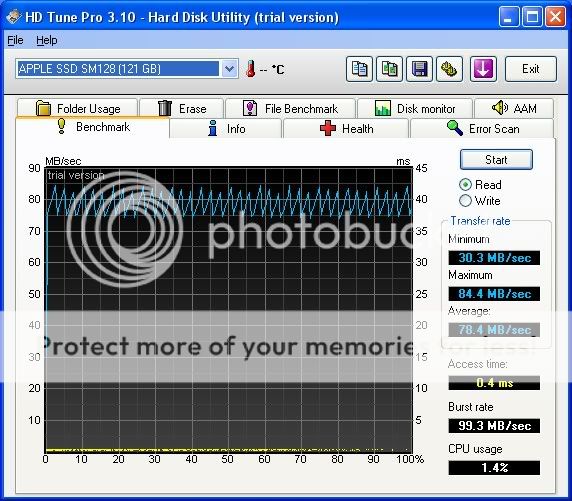

And just for fun I included an Apple Branded Samsung SSD I have from my Macbook. This is a 128GB MLC unit that is currently shipping with the new (late Oct 2008) Macbooks. I'll compare it to the Intel X25-M.

Intel X25-M (left) vs. Apple/Samsung SSD (right)

Umm, OK, it's fair to say that the reputation the Intel SSDs have is well deserved.

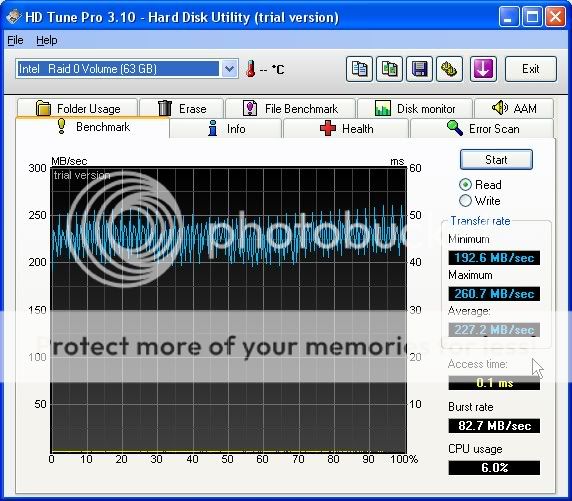

Ok let's move on to the RAID 0 benches.

Raid 0 Benchmarks

Configuration: Raid 0, 128K stripe, ICH9R. I started with the "default" of 128K. I found that enabling the volume write cache on the Intel driver made a significant difference. Results with the volume cache on and off:

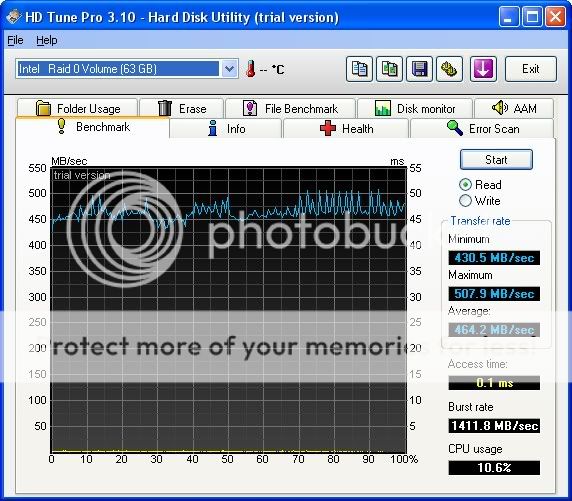

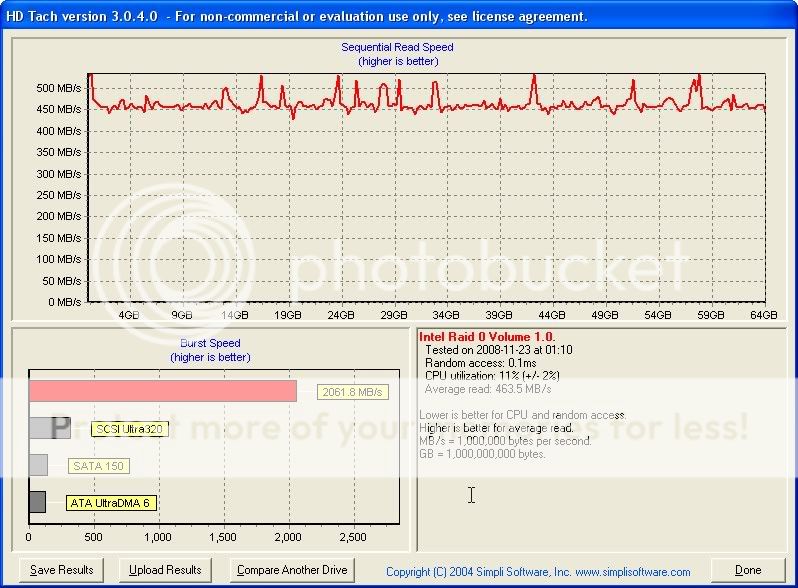

X25-E, 128k stripe, no cache (left) vs X25-E, 128k stripe, cache on (right)

Because of the significant improvement in performance, I left the volume cache enabled for all subsequent testing.

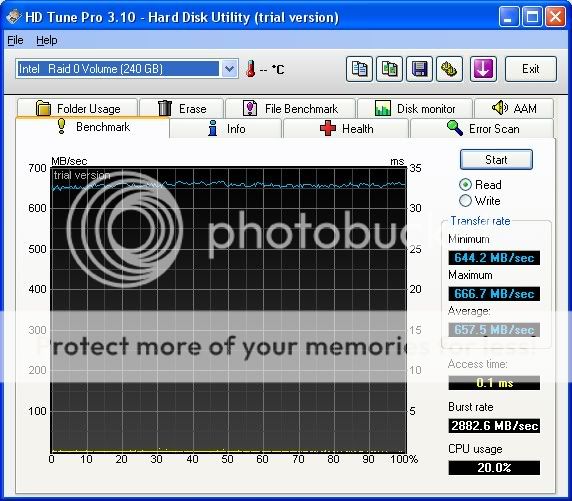

Intel X25-E (left) vs Intel X25-M (right) -- Read

As we saw with the single drives, the X25-M performs slightly better than the X25-E in terms of read rates. Again, writes completely reverse the story:

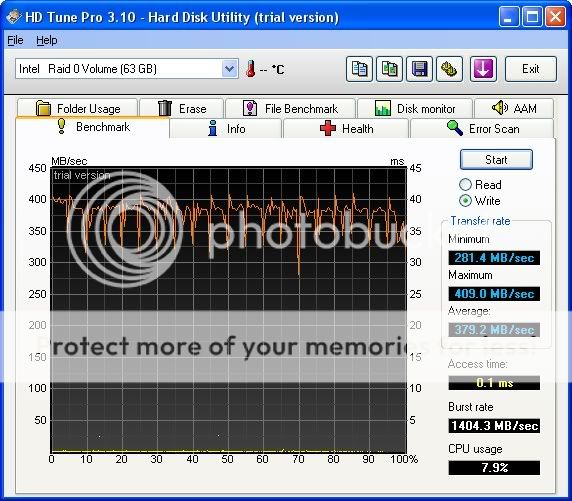

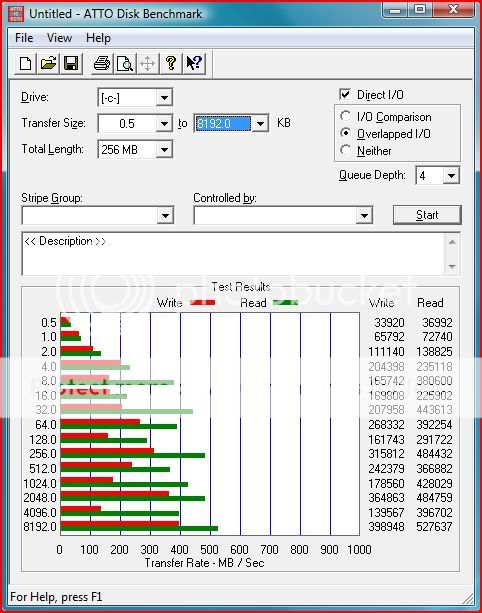

Intel X25-E (left) vs Intel X25-M (right) -- Writes

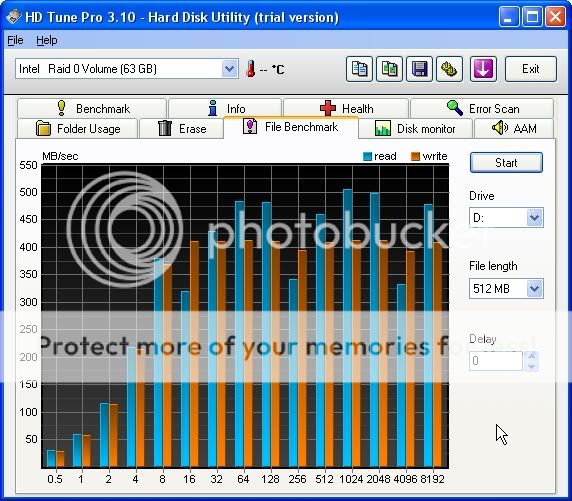

File Benchmark

Intel X25-E (left) vs Intel X25-M (right)

You can see the dramatic difference in write speeds!

At this point I questioned if changing the stripe size would make a significant difference. The common wisdom is that using a smaller stripe size would ensure that small files get split between the two drives, at the expense of longer access times. Well, since these are SSDs would it not make sense to use the smallest stripe size?
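To make the stripe-size question concrete, here is a minimal Python sketch of how a RAID 0 layout could map a request onto member drives. It assumes a plain round-robin layout with no offset or metadata area; the function names (`locate`, `chunks_for_request`, `drives_touched`) are made up for illustration and are not taken from the Intel driver.

```python
def locate(offset, stripe_size, num_drives):
    """Map a logical byte offset on the volume to (drive index, byte offset on that drive).

    Assumes simple round-robin striping starting at drive 0.
    """
    stripe_index = offset // stripe_size      # which stripe chunk the byte falls in
    drive = stripe_index % num_drives         # chunks rotate across the drives
    row = stripe_index // num_drives          # how many full rows precede this chunk
    return drive, row * stripe_size + offset % stripe_size


def chunks_for_request(start, length, stripe_size):
    """Number of stripe chunks a contiguous request of `length` > 0 bytes is split into."""
    first = start // stripe_size
    last = (start + length - 1) // stripe_size
    return last - first + 1


def drives_touched(start, length, stripe_size, num_drives):
    """Set of drive indexes that a contiguous request of `length` > 0 bytes touches."""
    first = start // stripe_size
    last = (start + length - 1) // stripe_size
    return {chunk % num_drives for chunk in range(first, last + 1)}


if __name__ == "__main__":
    file_size = 16 * 1024  # a hypothetical 16 KiB file at the start of the volume
    for stripe in (4 * 1024, 64 * 1024, 128 * 1024):
        print(stripe // 1024, "KiB stripe:",
              chunks_for_request(0, file_size, stripe), "chunk(s) on drives",
              sorted(drives_touched(0, file_size, stripe, 2)))
```

Under these assumptions, a 16 KiB file on a two-drive array is split into four chunks across both drives with a 4K stripe, but sits in a single chunk on one drive with a 64K or 128K stripe. More chunks per request means more bookkeeping for a software RAID driver, which is one plausible reason for the extra CPU load at small stripe sizes.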

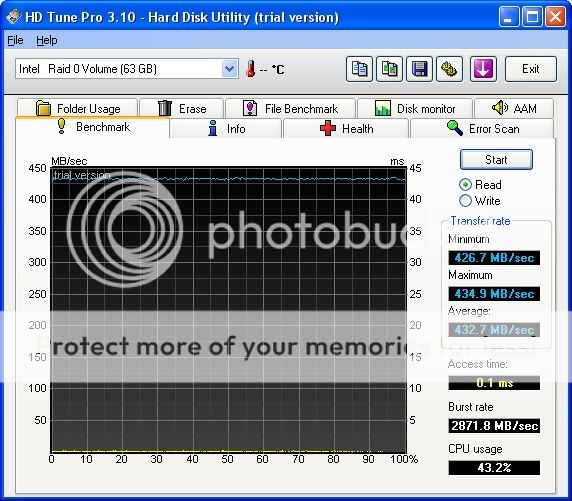

Intel X25-E, 4k stripe

Well, using a small stripe size does tend to smooth out the transfer rates, but check out the CPU usage! Obviously this is not optimal in terms of system usage. This small stripe size might be an option if I were using a hardware-based RAID controller.

Through trial and error, I determined that a stripe size of 64K or 128K is optimal for my system. 64k gave me slightly higher CPU usage and a much better burst rate, while 128k gave me lower CPU usage and a slightly higher average transfer rate.

Intel X25-E, 64k stripe (left) vs 128k stripe (right)

I'll probably use the 64k stripe, just because of its high burst rate (if indeed HD Tune is accurate). HD Tach shows a similar result.

OK, moving on: if two Intel units are good, how about three?

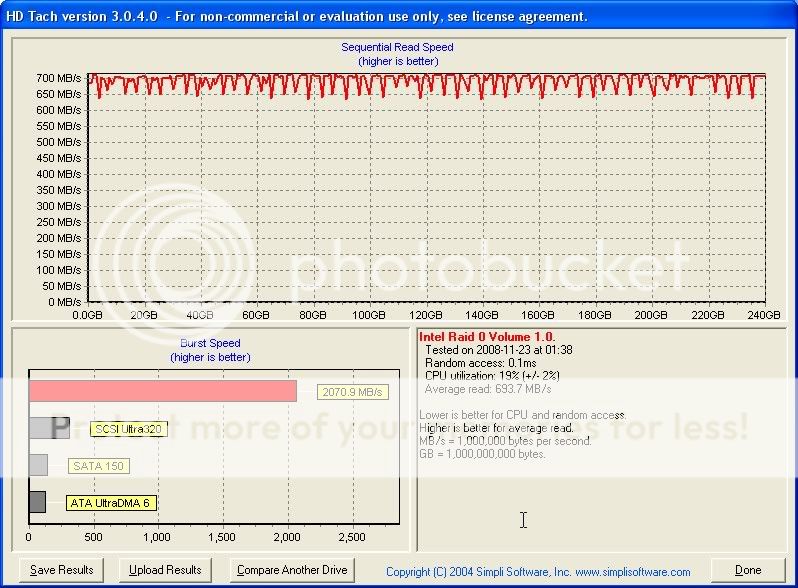

I used three X25-M units to make a Raid 0 volume (I didn't have a third E unit). The results are presented below:

Read

Write

I believe that the ICH9R has a throughput limit of about 666 MB/s. The three-drive array above might be capable of more, but I think this is the most the controller will pass. I believe this because I also used all 5 Intel units (a mix of E and M units) to create a single volume. While I realize this is suboptimal and probably would not be created for actual use, it should show higher read speeds than the three-drive array if the controller were not the bottleneck.

But again we see max throughput at around 666 MB/s. Perhaps it's related to the mix of E and M drives, but I think it's a limitation of the on-board RAID controller.
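The reasoning behind that guess can be written as a tiny model: ideal RAID 0 read throughput scales with the number of drives until something upstream caps it. This is only a sketch. The 250 MB/s per-drive figure is a placeholder chosen for illustration (it is not one of my measurements), and 666 MB/s is the ceiling I observed, not a documented ICH9R spec.

```python
def expected_read_mb_s(per_drive_mb_s, num_drives, controller_cap_mb_s):
    """Ideal striped read rate, clamped to an assumed controller ceiling."""
    ideal = per_drive_mb_s * num_drives   # perfect scaling, no overhead
    return min(ideal, controller_cap_mb_s)


if __name__ == "__main__":
    PER_DRIVE = 250   # hypothetical single-drive read rate in MB/s (placeholder)
    CAP = 666         # observed ceiling in MB/s (assumed to be the controller)
    for drives in (2, 3, 5):
        print(drives, "drives:", expected_read_mb_s(PER_DRIVE, drives, CAP), "MB/s")
```

With those placeholder numbers, two drives stay under the cap while three and five drives both land on the same ceiling, which matches the pattern in the graphs. It does not rule out the mixed E/M array as a contributing factor.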

Well, that's all I have for today. Hope you found this data interesting. I'll be rebuilding my main rig with the X25-Es as the system volume soon. I'll report back if I see any significant difference using the ICH10R vs the ICH9R.

One question for the storage experts out there: do you think going with a RAID card will significantly improve performance? Also, do RAID cards behave properly with power management (in other words, will my system be able to sleep and hibernate)? My previous experience with RAID cards was that they were designed for always-on servers, so power management was an issue.

Cheers!


i7 920@2.8
X3220@3.0
X3220@2.4
E8400@4.05
E6600@2.4
