Ovaj post je nonsens. Zašto su dečki iz plavog tabora moram bash zeleno kampa tako zlobno. Postavljaju li se oni bojati povratka na P4 dana još uvijek čak i sa novim arhitekture na raspolaganju?!

Enough with this!

Ovaj post je nonsens. Zašto su dečki iz plavog tabora moram bash zeleno kampa tako zlobno. Postavljaju li se oni bojati povratka na P4 dana još uvijek čak i sa novim arhitekture na raspolaganju?!

Enough with this!

I do not think that this is "amazing result", as istanbul has 50% more peak FLOPS then the same-clocked Xeon. You may recall that the Pentium 4 also has more peak FLOPS than equivalent opteron (but not the same-clocked). It is surprising that with such superiority in the peak FP performance 6-core Opteron manages to lose in many computational applications to same-clocked Xeon (like 3DMax). Incidentally, the latest data from top500 shows that number of Intel systems was increased from 368 to 393 (not including Itanium), and the number of AMD systems reduce from 61 to 43.

SweClockers.com

CPU: Phenom II X4 955BE

Clock: 4200MHz 1.4375v

Memory: Dominator GT 2x2GB 1600MHz 6-6-6-20 1.65v

Motherboard: ASUS Crosshair IV Formula

GPU: HD 5770

And that's where objectivity went out the window. Configuring systems with different ram sizes to benchmark an app. as ram/problem size sensitive as linpack in order to compensate for whatever perceived advantage of the lesser core cpu is nothing, but a joke. Architectural strengths? The Nehalem system is capable of using more than 12GB of ram, hell they should load 24GB of ram on both systems and address Istanbul's bandwidth problems that way, not by reducing Nehalem's total ram to 12GB compared to 16GB for the Istanbul system.

Real world situation? You're joking right? If you buy the 2435, you get a DDR2 based system, that's real world, and that's what you pay for. Your competitors who pay for a DDR3 based X5550 won't be running their systems underclocked to please you.

For those of you who don't know, problem size is dictated by ram size, and this is crucial to actual peak gigaflops produced in each test. I'm not a professional and even I know this?So a 33% ram size/problem size advantage should produce better gigaflops, and the 6 cores of the 2435 did take advantage of that.

Besides the ram size/problem size discrepancy, the X5550 would appear to me to be bandwidth starved; 12GB for 8 bloomfield cores in an app as efficiently coded as linpack is nothing but retarded.You need at least 24GB of ram to feed those cores. I hate testing methodologies which cripple one hardware in order "compensate" for perceived weaknesses of another, unfortunately, that's what this benchmark is. It is very very far from real world.

Nice to find Linpack is useful for something at least! But still, and this is the main problem here, the test is biased and doesn't represent what you'll get when you buy any of these setups. Anybody with 2 or 3 runs of Linpack knows this, and I bet the OP has many, many more. I think is very clear in Zucker2k's post.

It's useful to show how bad perfomance per core is on the AMD system

Friends shouldn't let friends use Windows 7 until Microsoft fixes Windows Explorer (link)

Look, I'm not flaming anyone, tried to calm one guy down.

It is as plain simple as it get. You test for theory when you test clock for clock or you test all out for absolute performance. You don't mix both or optimize one, that's the essence of BIAS in the first place.

Engineer? His or her occupation as NOTHING to do with being biased or even the reason for them to be BIASED. Hell, maybe sales of their AMD products are slow, I DON'T know why some folks do what they do. Rahul Sood is a very smart guy, sold Intel and AMD products and yet, do a search of this forum for Rahul is an AMD fanboy and see what happens. Let's not forget that he was one of the folks calling Conroe's first test FAKED. Defending Fugger and the Gang here started me on the road to being banned at HardOCP. Then the Cowards deleted the thread

I say again, it looked to me like 4 cores with no HT and a 100% usage of 6 cores with 16GB vs 4 cores and 12GB is weak testing IMHO! The result is moot if Intel won it or not, it wasn't consistent is all I'm saying. It's like racing cars in different classes then putting the wrong fuel in one.

QFT!Originally Posted by Zucker2k

Originally Posted by Movieman

Posted by duploxxx

I am sure JF is relaxed and smiling these days with there intended launch schedule. SNB Xeon servers on the other hand....

Posted by gallag

there yo go bringing intel into a amd thread again lol, if that was someone droping a dig at amd you would be crying like a girl.qft!

Sorry, i wasn't aware of your expertise in testing HPC's, and your familiarity with HPL testing. Would you mind defining how much the bloomfield was hampered by the ram deficit with 4(real cores) and 4 smt... even though it was shown in the thread smt hurts nehalem, not helps in this case? Higher ram size, but higher latency as well, as well as being bandwidth hamstringed in comparison. You are talking about this test as if it is a desktop app. I feel your out of your element here, and your "common" sense anecdotes are out of place as well. They could also, this might be hard for you to grasp, be configuring a single node of a cluster *see real world* and comparing those nodes clock for clock... but i m sure you understood that... ^^ lol.

" Business is Binary, your either a 1 or a 0, alive or dead." - Gary Winston ^^

Asus rampage III formula,i7 980xm, H70, Silverstone Ft02, Gigabyte Windforce 580 GTX SLI, Corsair AX1200, intel x-25m 160gb, 2 x OCZ vertex 2 180gb, hp zr30w, 12gb corsair vengeance

Rig 2

i7 980x ,h70, Antec Lanboy Air, Samsung md230x3 ,Saphhire 6970 Xfired, Antec ax1200w, x-25m 160gb, 2 x OCZ vertex 2 180gb,12gb Corsair Vengence MSI Big Bang Xpower

*begin rant*

you obviously didnt get the point of my post. GTFO.

i swear im tired of seeing this. just because 1 company is leading doesnt mean the other companies products are crap. stuff becomes crap when it dies or is 5 years old. why dont i call your i7 crap cause if your definition of crap is new tech. then thats exactly what it is, CRAP.

*end rant*

I'm sorry but this is just impossible. There's no way an AMD processor could win any benchmark against the Nehalem architecture clock for clock. The Nehalem is superior in every way and all tests that may show anything different has to be biased (but no benchmark where the Nehalem wins could EVER be biased).

Sigh.

Maybe. I don't know anything about servers so... But at the same time, if I had done that I'm pretty sure it would be the exact same guys defending the blog post as are critizing it now... It's not like we (the forum) haven't had this discussion before when Shanghai and Deneb launched and then later Nehalem Xeons and now Istanbul.

With the same AMD leaning guys being proved wrong but so what? Many of us were proven RIGHT after the first Conroe tests were verified. Many of US were proven right about Native Quad Core being marketing hype, that it would be slower than Intel MCM unless they improved the Core's IPC, 4 X 4, were also right about Barcelona, Deneb and Phenom in general. So please Martin, don't try to marginalize some of the criticisms.

A fellow that gets along with everyone here once said to a poster who had to eat crow. Not the exact words but went like; "Don't feel bad for being mislead, bad info in = bad info out". That's also what a Bad review is.

Originally Posted by Movieman

Posted by duploxxx

I am sure JF is relaxed and smiling these days with there intended launch schedule. SNB Xeon servers on the other hand....

Posted by gallag

there yo go bringing intel into a amd thread again lol, if that was someone droping a dig at amd you would be crying like a girl.qft!

Crunch with us, the XS WCG team

The XS WCG team needs your support.

A good project with good goals.

Come join us,get that warm fuzzy feeling that you've done something good for mankind.

I like to use a chinese proverb about this: Fool me once, shame on you. Fool me twice, shame on me.

Crunching for Comrades and the Common good of the People.

Are we suffering from amnesia already? What happened to adjustment of problem size to "compensate" for tri-channel? Tri-channel is a vital solution to address bandwidth needs for the very high ipc bloomfield cores. It is neither a gimmick, nor an accident. Your questions are rather interesting since they expose your lack of understanding about the hardware in use as well as the software in question. For your info, I know for fact that HT hurts linpack, that's why the lack of info on the hardware configuration is so misleading. This being made more obvious from how the tester fails to even acknowledge the significance of problem sizes in the software he was testing. Please!

If problem size makes no difference (erroneously, of course) in linpack, then why give 16GBs to the 2435 and adjust problem size to max? Why not set similar problem sizes? Why set a higher problem size in order to "compensate" for the higher bandwidth of the x5550? All you have to do is look at the problem size differences and ask yourself why is it so?

The justification for differing ram sizes should not be about trying to match bandwidths or anything, because everyone who knows anything about linpack, knows that higher ram size=higher gflops. It beats me how the tester can overlook this fact seeing that ram sizes affects the max problem size which directly influences gflops.

You can choose to believe what you want. I have used linpack, it calculates gflops. It doesn't work differently in server environments, and even if it does, it still doesn't make a difference because I have used on Core i7 too.

Originally Posted by Movieman

Posted by duploxxx

I am sure JF is relaxed and smiling these days with there intended launch schedule. SNB Xeon servers on the other hand....

Posted by gallag

there yo go bringing intel into a amd thread again lol, if that was someone droping a dig at amd you would be crying like a girl.qft!

Nah, I just don't buy it. There's always something wrong with a review whichever CPU/GPU/whatever comes out on top, it's just that some tend to scream when it's in one direction and others in the other direction. Example; HardOCP's review of Deneb. They were using slower and less RAM for the AMD system than the Intel one (C2Q) and some were complaining. Still the "other" side had to defend the review and say "but the results are similar to what others have shown so it shouldn't matter". Why not?

If it's the same here I wouldn't know. But I do know that when I read a thread title like this one, I'm sure I'll be reading some comments about the review/whatever being bad.

To Movieman; you say trust "us"(XS) instead of "them"(reviewers/bloggers). The only problem is that quite a few of "them" are a part of "us". And seeing how some behave here I'd rather trust some of "them" than a few of "us"

In the end it's all about credibility. If someone is consistently showing skewed results one way or another then that person loses credibility and that goes to reviewers and forum members alike. (And I'm sure you agree with me here.)

In this case it was about not stating some of the settings (HT on/off turbo on/off etc). Instead of ranting, why not just ask him and maybe try it yourselves (those of you who got the hardware to do so) to validate his results (or not).

Long post and drifting away from topic. I'll stop.

I m just going to ignore your insults, and apparent lack of understanding as to why N size was adjusted. The Value of N for the nehalem, was the original value set, IE the largest number for that platform before swapping occurs. The AMD system recieved a LARGER number to calculate the efficiency of the platforms array calculations, so the nubmer was increased to fill up the memory as much as possible, since it had 16GB vs 12gb. If i calculate 1million digits of pi, or a billion digits of pi, the scale is linear.. if the nehalem had 16gb of ram(offsetting the tri channel advantage) it would share the same problem size to fill up the ram to 100% efficiency, i m not sure what is so hard to understand mathematically about this? They chose the largest problem set for the ram size vs what the cores can handle..ie the best config to max out each processes performance, 12gb probably left idle time on some cores in this test, so they increased it to max out the processing, as nodes in an HPC are fully utilized.... that extra 4gb would have overshot the nehalems cores calculation ability, so with equal ram, and same problem size it probably would have scored lower. They are building nodes, the most efficient nehalem node for linpack is 12gb .. for amd its 16gb, they are not single systems, they are nodes... when you build an hpc, it would take X amount of intel nodes vs X amount of AMD nodes to reach a defined FLOP level, thats why they are interested in the best node configuration... and the triple channel set up was to take advantage of the latency bonus ddr3 has.. ?!

Is this that surprising, the scores.. I mean.. look, it has already been explained at least twice in the thread why AMD outscored nehalem..its because physical processors matter in HPL.

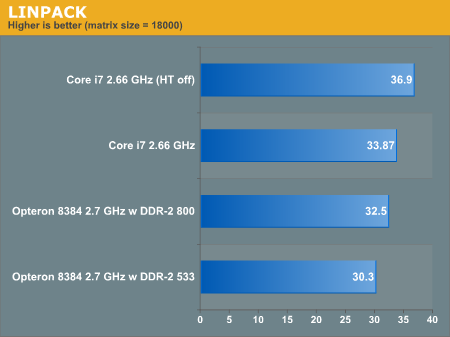

source Impact of problemsize over 30k is almost negligible

here is an i7 and shanghai with the same memory and same integer N... see how close they are... now see how the 4SMT threads actually slow performance, now this may be a stretch for you, as you seem not to understand whats going on.. but pretend you added a dual core to the amd score ontop the the quad thats there.. yes thats right.. as significant increase.. 6cores.. and with 6 cores it only has efficiency of 79%, the nehalem, with 4 cores reaches 86% efficiency.. so thats what i dont understand, nobody is saying that amd's ipc is all the sudden better than nehalems.. 7% deficit here still, but the way the platform is priced and assembled, cost wise that is impressive gains.

As for your gross generalizations... are you saying if i added 500gb of ram to an intel atom it would scale in gflops? give me a break. Anyone who knows anything about Linpack, which i m sure a HPC Server engineer does more so than us desktop overclockers... would probably account for that. Do you not understand they have no agenda.. as a company they want something that is the most cost efficient / profitable.. IE this equals them getting contracts. And in this case, for this specific type of calculation, it seems that creating nodes with 6core amd cpu's is the most efficient route. This does not mean all applications, the scope is only what is being tested here...

I will choose to believe people in the field, versus your anecdotal hearsay, and people in this thread who have a vast amount of knowledge about cpu's and are happily sharing, versus joing the flame party.

Last edited by villa1n; 06-30-2009 at 01:52 PM. Reason: clarity, sources.

" Business is Binary, your either a 1 or a 0, alive or dead." - Gary Winston ^^

Asus rampage III formula,i7 980xm, H70, Silverstone Ft02, Gigabyte Windforce 580 GTX SLI, Corsair AX1200, intel x-25m 160gb, 2 x OCZ vertex 2 180gb, hp zr30w, 12gb corsair vengeance

Rig 2

i7 980x ,h70, Antec Lanboy Air, Samsung md230x3 ,Saphhire 6970 Xfired, Antec ax1200w, x-25m 160gb, 2 x OCZ vertex 2 180gb,12gb Corsair Vengence MSI Big Bang Xpower

Since triple channel is supposed to be overkill for i7, why not run dual channel 8gb or 16gb in both systems?

Either way, for whatever reason, if they are giving different work unit sizes, it automatically renders this benchmark session dodgy.

Last edited by villa1n; 06-30-2009 at 06:37 PM. Reason: some people may have experience with the insults, please be kind, we re arguing points of view, not character foibles.

" Business is Binary, your either a 1 or a 0, alive or dead." - Gary Winston ^^

Asus rampage III formula,i7 980xm, H70, Silverstone Ft02, Gigabyte Windforce 580 GTX SLI, Corsair AX1200, intel x-25m 160gb, 2 x OCZ vertex 2 180gb, hp zr30w, 12gb corsair vengeance

Rig 2

i7 980x ,h70, Antec Lanboy Air, Samsung md230x3 ,Saphhire 6970 Xfired, Antec ax1200w, x-25m 160gb, 2 x OCZ vertex 2 180gb,12gb Corsair Vengence MSI Big Bang Xpower

1) I would recall you that MKL is highly architecture optimized. This is why Intel releases new ver. of MKL for each new CPU. This is why Anandetech test is pointless (since they used old version 9.1). Version 10.2 is much more effective for Nehalem. (over 90% efficiency for dual-socket server - see the last graph)

http://software.intel.com/sites/prod...mkl/charts.pdf

2) According to Intel HT can't really help this time:

http://www.intel.com/software/produc...sing_Intel_MKL

http://www.intel.com/software/produc...UG_linpack.htmTo achieve higher performance, you are recommended to set the number of threads to the number of real processors or physical cores.

The use of Hyper-Threading Technology.

Hyper-Threading Technology (HT Technology) is especially effective when each thread is performing different types of operations and when there are under-utilized resources on the processor. Intel MKL fits neither of these criteria as the threaded portions of the library execute at high efficiencies using most of the available resources and perform identical operations on each thread. You may obtain higher performance when using Intel MKL without HT Technology enabled. See Using Intel® MKL Parallelism for information on the default number of threads, changing this number, and other relevant details.

If you run with HT enabled, performance may be especially impacted if you run on fewer threads than physical cores. Moreover, if, for example, there are two threads to every physical core, the thread scheduler may assign two threads to some cores and ignore the other ones altogether. If you are using the OpenMP* library of the Intel Compiler, read the respective User Guide on how to best set the affinity to avoid this situation. For Intel MKL, you are recommended to set KMP_AFFINITY=granularity=fine,compact,1,0.

Bookmarks