Oh, thx. Though that wasn't what I was thinking about.

It'll be along the lines of:

How long will it take to compute 10+ billion digits of pi? (or some other constant)

If you don't have the RAM for it, the run time will rely entirely on disk speed and the size of your working memory. That's why it will be biased towards machines with a lot of memory.

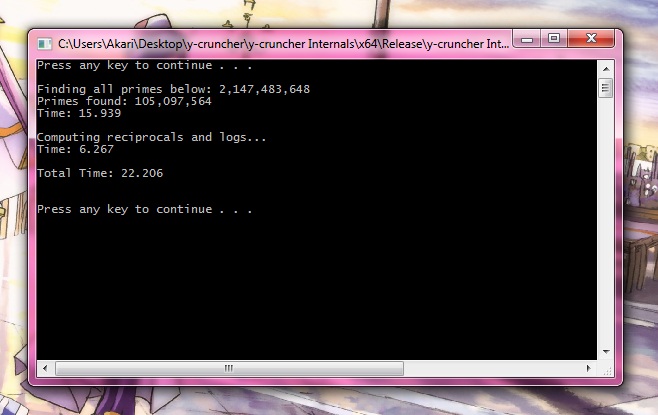

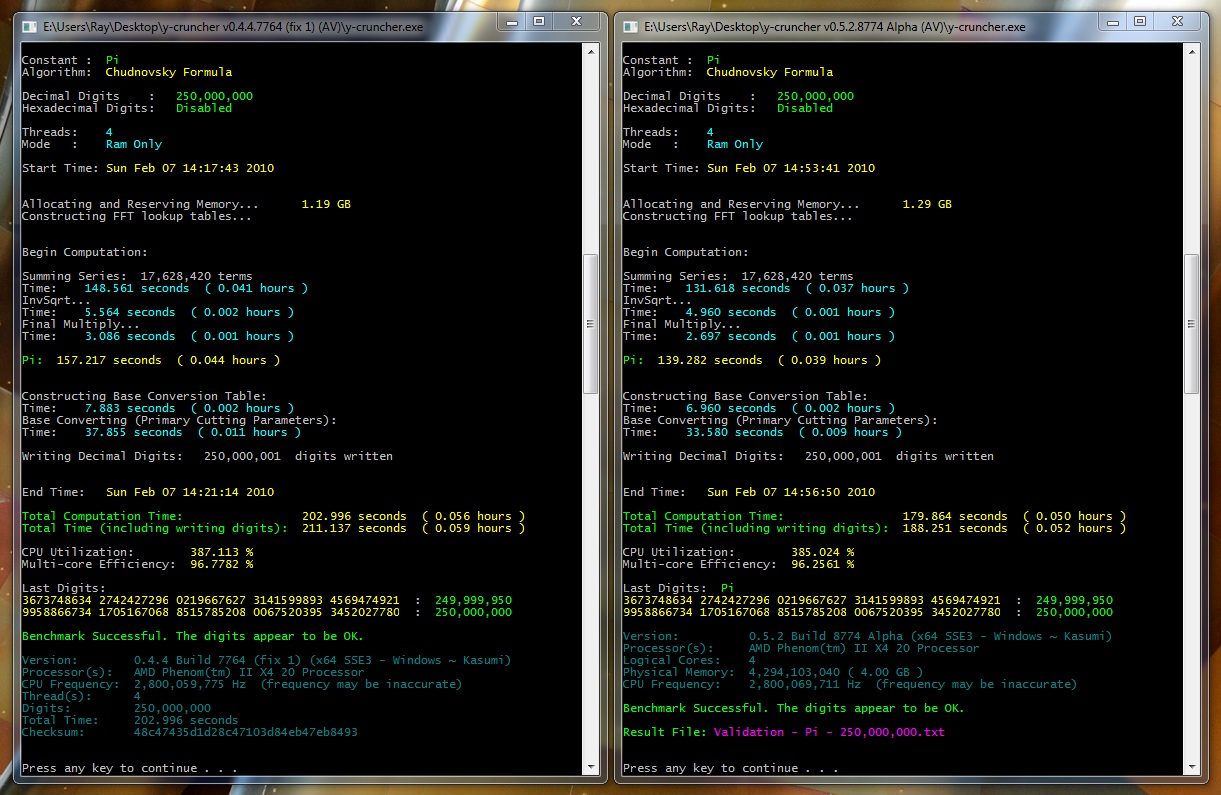

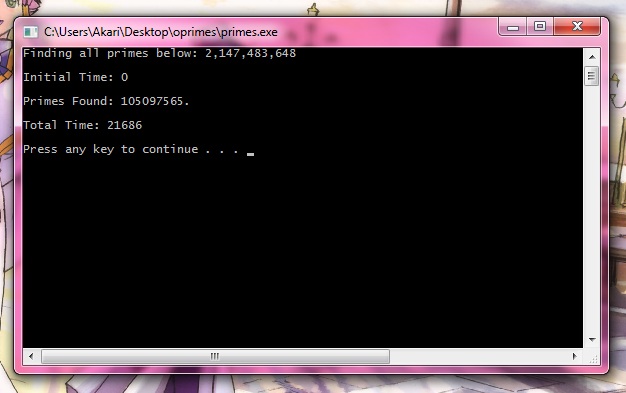

Obviously, a computation of such a size will take hours when done on disk (heck, it takes an hour and 40 minutes on my workstation - all in ram). But then again, there aren't any benchmarks that last more than a few minutes.

So it'll be interesting to present a longer-running benchmark... more like an endurance benchmark that'll stress CPU, memory, and disk.

So it definitely won't be for the impatient...

Making it suitable for competitive benchmarking will be tricky.

The very nature of this benchmark will heavily favor the multi-socket servers that have a ton of memory and a ton of expansion slots to fit in a ton of hard drives.

So a slower workstation that has enough memory to "ram-only compute" the entire computation or ram-drive all the disk accesses will beat an overclocked desktop any day...

(well, it's not like multi-sockets haven't already been dominating this benchmark...)

So the most important factors for getting a good time with this Pi-based HD benchmark will be (in descending order of importance):

- Whether you have enough memory to not need the disk, or to ram-drive all the I/O.

- The # of hard drives you have (and they won't need to be in RAID). Sustained total disk bandwidth under various sequential and non-sequential access patterns will be what matters (though it'll be bottlenecked by the slowest HD).

- The amount of memory you have. The more memory you have, the less the program will need to access the disk.

- Finally, clock-speed and memory bandwidth...
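
To put a rough number on the "bottlenecked by the slowest HD" point, here's a back-of-the-envelope sketch in Python. It assumes the work is split evenly across all drives, so every drive has to finish its share and the slowest one sets the pace. The function names and the drive speeds are made up for illustration; this is not how the actual program schedules its I/O.

```python
def effective_bandwidth(drive_mb_per_s):
    """Aggregate MB/s if data is split evenly across all drives.

    Assumption: with an even split, each drive handles 1/N of the data,
    so the slowest drive decides when the whole transfer finishes.
    """
    if not drive_mb_per_s:
        raise ValueError("need at least one drive")
    return len(drive_mb_per_s) * min(drive_mb_per_s)


def transfer_seconds(total_mb, drive_mb_per_s):
    """Seconds to move total_mb at the aggregate bandwidth above."""
    return total_mb / effective_bandwidth(drive_mb_per_s)


if __name__ == "__main__":
    # Hypothetical sustained speeds (MB/s) for four drives, one of them slow.
    drives = [100.0, 100.0, 60.0, 100.0]
    print("aggregate MB/s:", effective_bandwidth(drives))
    print("seconds for 1 TB of traffic:", transfer_seconds(1_000_000, drives))
```

With those made-up numbers, the one 60 MB/s drive drags the aggregate down to 240 MB/s instead of the 360 MB/s you'd get by just adding the speeds up, so a single slow drive costs more than you'd think.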
